Wow!! It must have been a busy week for the OCI developers. The last week of February was full of new feature updates to the OCI platform. Let’s have a look at some of this goodness 🙂

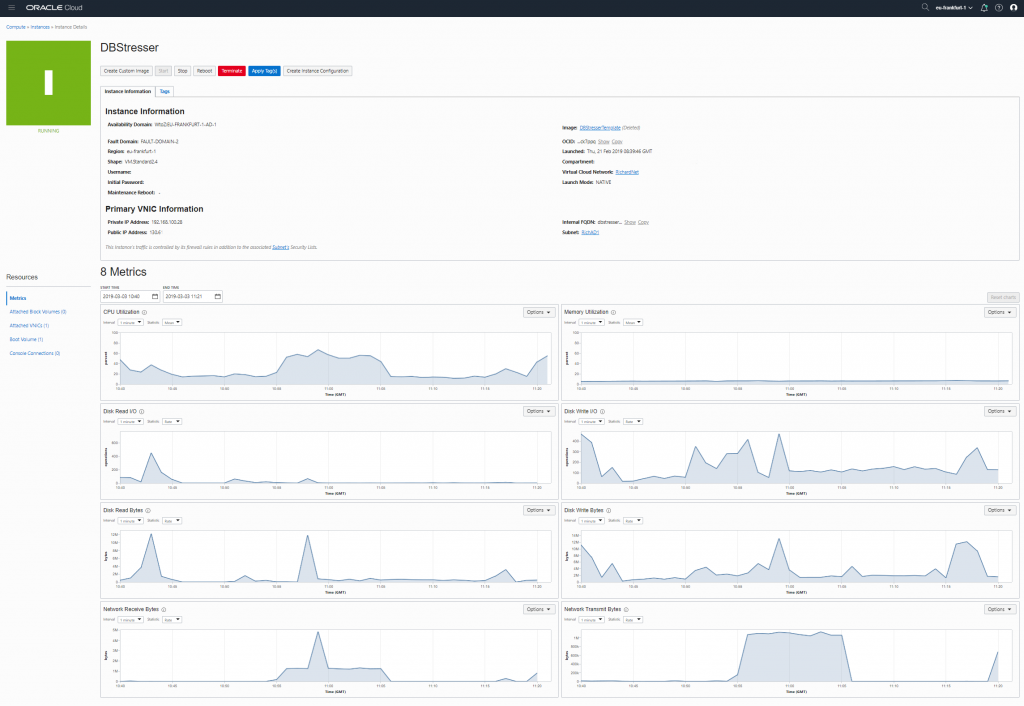

Performance Metrics

On your instances (VMs and bare metal) you now have full performance metrics available in the cloud portal and through the APIs. This gives you quick insight into the state of your instances, not only at a single point in time but also through full historical data. Stats are available for CPU utilization, memory utilization, disk read/write I/O operations and bytes, and network receive and transmit.

BTW, performance metrics are not only available on instances, but also on other resources such as the Load Balancer service, which provides metrics like inbound requests, active connections, unhealthy back-ends, HTTP responses in the 2xx, 3xx, 4xx or 5xx range, and more.

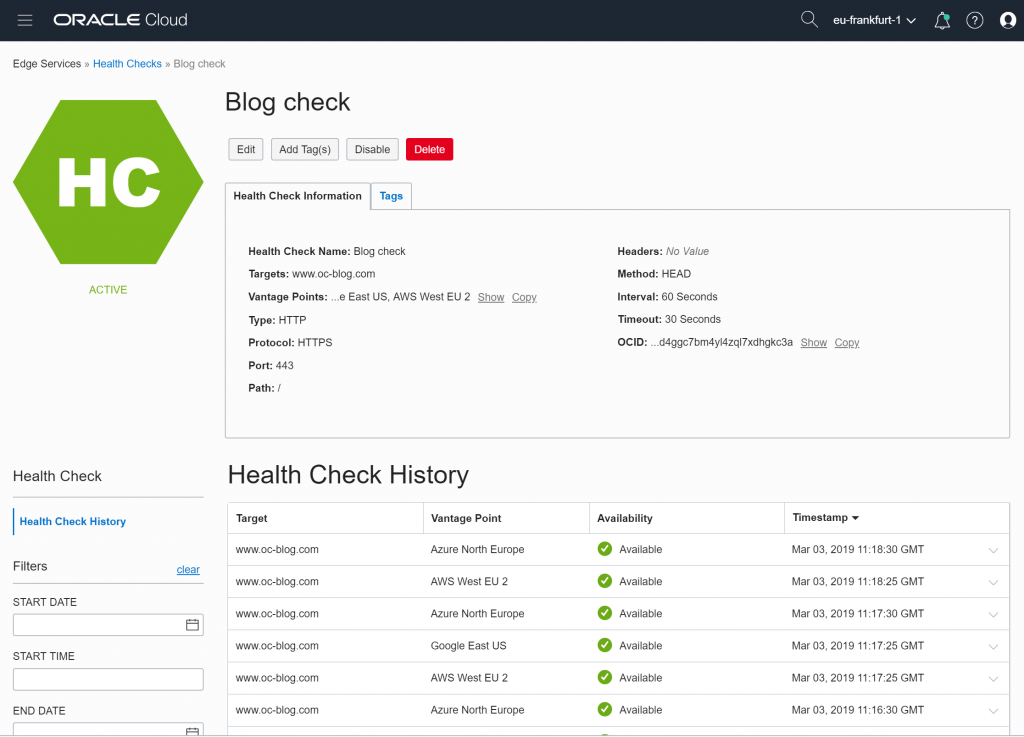

Health Checks

Besides monitoring your instances from the inside, there is also a great new feature that lets you monitor them from the outside, from various vantage points. With Health Checks you can monitor any service, whether it runs on OCI or anywhere else on the internet, using HTTP or ICMP (ping) requests. The vantage points can be chosen from various locations inside AWS, Azure or Google Cloud.

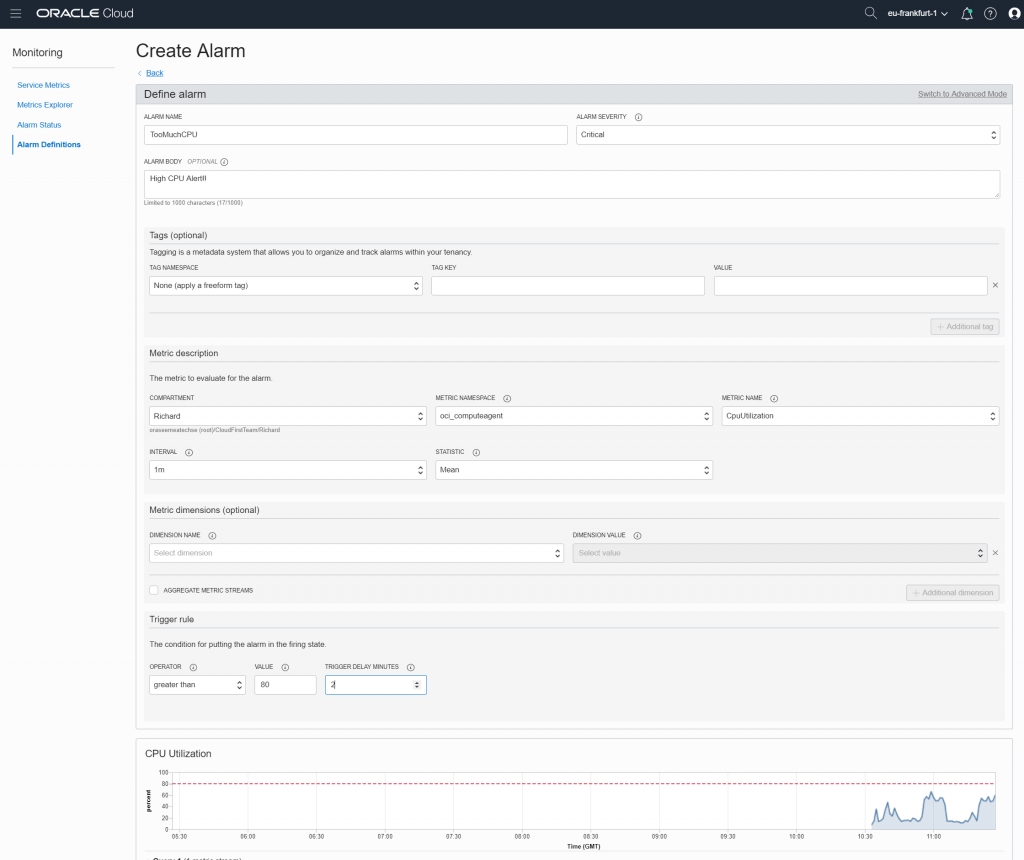

Alarm Notification

With Performance Metrics and Health Checks you can monitor from both the inside and the outside, and with the new Alarm Notification service you can trigger specific alarms based on the status of these metrics and health checks. An alarm can send an email, or you can use the integration with PagerDuty (pagerduty.com).
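To make the idea of "alarm on a metric" a bit more concrete, here is a minimal sketch of threshold-based alarm evaluation. It is not the OCI Monitoring API: the `Alarm` class, the `pending_points` rule (fire only after several consecutive breaching datapoints) and the `notify` callback standing in for email or PagerDuty are all hypothetical, chosen just to show the general pattern.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Alarm:
    """Hypothetical alarm: fires when a metric breaches a threshold for
    `pending_points` consecutive datapoints (not the OCI API)."""
    name: str
    threshold: float
    pending_points: int
    notify: Callable[[str], None]  # stand-in for an email or PagerDuty hook

    def __post_init__(self) -> None:
        if self.pending_points < 1:
            raise ValueError("pending_points must be at least 1")

    def evaluate(self, datapoints: List[float]) -> bool:
        """Fire (and notify) only if the latest `pending_points` values all
        exceed the threshold, so a single spike is not enough."""
        if len(datapoints) < self.pending_points:
            return False
        recent = datapoints[-self.pending_points:]
        firing = all(value > self.threshold for value in recent)
        if firing:
            self.notify(f"ALARM {self.name}: last {self.pending_points} "
                        f"datapoints above {self.threshold}")
        return firing


sent: List[str] = []  # collects "notifications" instead of sending them
cpu_alarm = Alarm("high-cpu", threshold=80.0, pending_points=3, notify=sent.append)
print(cpu_alarm.evaluate([50.0, 85.0, 90.0, 95.0]))  # True: last three are > 80
print(cpu_alarm.evaluate([85.0, 90.0, 70.0]))        # False: 70 breaks the streak
print(sent)
```

Requiring several consecutive breaches is one common way to avoid paging someone for a single noisy sample; the real service has its own configuration options for this.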

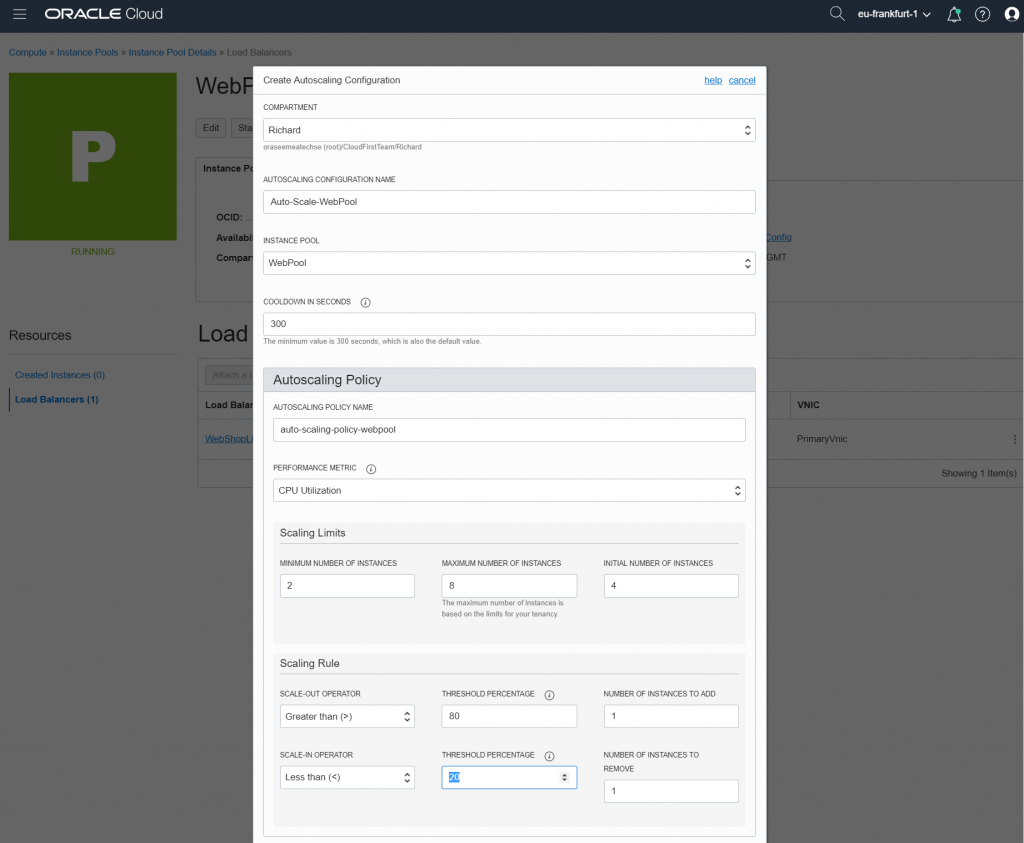

Instance Pool updates

OCI already let you create a pool of instances: for example, based on one template web server, you could easily scale out to 10 of these web servers with a single click. Using the new performance metrics, your instance pool can now scale out and in automatically! You set thresholds that increase or decrease the size of the instance pool for you.
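As a rough illustration of threshold-based autoscaling, the sketch below computes the next pool size from an average CPU value. The function name `next_pool_size`, the default thresholds (75% / 25%), the step size and the min/max bounds are hypothetical placeholders, not OCI autoscaling settings.

```python
def next_pool_size(current: int, avg_cpu: float, *, scale_out_above: float = 75.0,
                   scale_in_below: float = 25.0, step: int = 1,
                   min_size: int = 1, max_size: int = 10) -> int:
    """Return the new instance-pool size for one evaluation cycle.

    Hypothetical threshold policy: grow by `step` when average CPU is above
    `scale_out_above`, shrink by `step` when it is below `scale_in_below`,
    and always stay within [min_size, max_size].
    """
    if min_size > max_size:
        raise ValueError("min_size must not exceed max_size")
    if avg_cpu > scale_out_above:
        target = current + step
    elif avg_cpu < scale_in_below:
        target = current - step
    else:
        target = current  # inside the band between thresholds: no change
    return max(min_size, min(max_size, target))


size = 2
for cpu in [80.0, 90.0, 50.0, 10.0, 10.0, 10.0]:
    size = next_pool_size(size, cpu, min_size=1, max_size=3)
    print(f"avg CPU {cpu:5.1f}% -> pool size {size}")
```

The gap between the scale-out and scale-in thresholds keeps the pool from flapping up and down around a single value; a production policy would typically also add a cooldown period, which is omitted here.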

There is now also full integration with the Load Balancer service, and the instance pool can manage the load balancer for you. When your instance pool grows, the new instances are automatically added to the load balancer back-end, and when the pool shrinks, the removed instances are automatically taken out of the load balancer back-end.
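Conceptually, keeping the load balancer in step with the pool is a reconciliation problem: compare the pool's members with the current back-ends and add or remove the difference. The sketch below shows that idea with plain sets; the `reconcile_backends` and `sync` helpers and the `IP:port` strings are hypothetical and say nothing about how OCI implements it internally.

```python
from typing import Set, Tuple


def reconcile_backends(pool_members: Set[str],
                       lb_backends: Set[str]) -> Tuple[Set[str], Set[str]]:
    """Return (to_add, to_remove) so the back-end set mirrors the pool."""
    to_add = pool_members - lb_backends      # new instances not yet behind the LB
    to_remove = lb_backends - pool_members   # back-ends whose instance left the pool
    return to_add, to_remove


def sync(pool_members: Set[str], lb_backends: Set[str]) -> Set[str]:
    """Apply one reconciliation pass and return the updated back-end set."""
    to_add, to_remove = reconcile_backends(pool_members, lb_backends)
    for backend in sorted(to_add):
        print(f"add backend {backend}")
    for backend in sorted(to_remove):
        print(f"remove backend {backend}")
    return (lb_backends - to_remove) | to_add


backends = {"10.0.1.2:80", "10.0.1.3:80"}
# Pool scales out from 2 to 3 instances.
backends = sync({"10.0.1.2:80", "10.0.1.3:80", "10.0.1.4:80"}, backends)
# Pool scales in to a single instance.
backends = sync({"10.0.1.2:80"}, backends)
print(sorted(backends))  # ['10.0.1.2:80']
```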

New Resource Manager Service

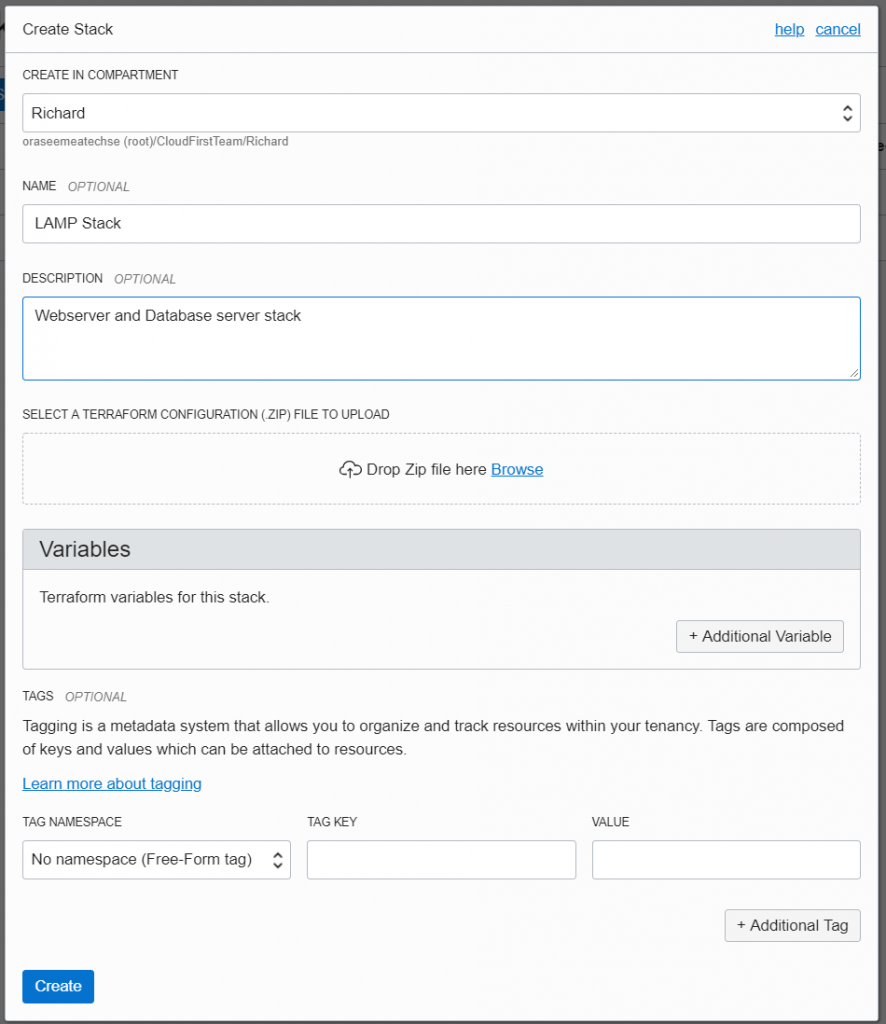

The new Resource Manager service lets you deploy infrastructure as code. The service supports Terraform configurations and automatically creates jobs to deploy or update them. So if you want to deploy from a Terraform configuration, all you need to do is create a stack and run a job on it in the cloud portal (or via the CLI/API), and the service deploys it for you.

New Edge Services

Finally, two more new edge services: the Traffic Management Steering Policies service and the Web Application Firewall (WAF) service. The WAF service is PCI compliant and lets you create and manage rules against internet threats, including Cross-Site Scripting (XSS), SQL injection and other OWASP-defined vulnerabilities. Unwanted bots can be mitigated while desirable bots are still allowed in. Access rules can restrict traffic based on geography or on the signature of the request.
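The access-rule idea can be sketched as an ordered list of rules where the first match decides. This is only a generic illustration, not the OCI WAF rule model: the `AccessRule` class, the "first match wins, default ALLOW" semantics, the user-agent substring standing in for a request signature, the bot names and the placeholder country codes `XA`/`XB` are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class AccessRule:
    """Hypothetical access rule; empty/None conditions match anything."""
    name: str
    action: str                                  # "ALLOW" or "BLOCK"
    countries: FrozenSet[str] = frozenset()      # ISO country codes to match
    ua_contains: Optional[str] = None            # crude stand-in for a signature

    def matches(self, country: str, user_agent: str) -> bool:
        if self.countries and country not in self.countries:
            return False
        if self.ua_contains is not None and \
                self.ua_contains.lower() not in user_agent.lower():
            return False
        return True


def decide(rules: List[AccessRule], country: str, user_agent: str,
           default: str = "ALLOW") -> str:
    """Evaluate rules in order; the first matching rule decides."""
    for rule in rules:
        if rule.matches(country, user_agent):
            return rule.action
    return default


rules = [
    AccessRule("allow-good-bot", "ALLOW", ua_contains="ExampleGoodBot"),
    AccessRule("block-bad-bot", "BLOCK", ua_contains="ExampleBadBot"),
    AccessRule("block-geo", "BLOCK", countries=frozenset({"XA", "XB"})),
]
print(decide(rules, "XA", "Mozilla/5.0"))         # BLOCK (geography rule)
print(decide(rules, "XA", "ExampleGoodBot/1.0"))  # ALLOW (good bot listed first)
```

Putting the "allow good bot" rule first is what lets a desirable bot through even from a blocked region; with first-match semantics, rule order is part of the policy.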

The Traffic Management Steering Policies service lets you configure policies that serve intelligent responses to DNS queries: different answers (endpoints) may be served for the same query depending on the logic you define in the policy. You can use it for global load balancing, failover, geolocation-based steering and ASN/IP prefix steering. The service also integrates with the new Health Checks service, so if instances fail their health checks, the steering policy can automatically stop sending traffic to them.
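The failover case boils down to: of the answers the policy knows about, return the most preferred one whose health check is passing. The minimal sketch below shows that logic; the `Answer` type, the "lower priority number wins" convention and treating endpoints without a health result as unhealthy are assumptions for illustration, not the OCI steering policy API. The IPs are documentation addresses (RFC 5737).

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Answer:
    rdata: str     # e.g. the IP address returned in an A record
    priority: int  # lower value = preferred (assumed convention)


def steer(answers: List[Answer], healthy: Dict[str, bool]) -> Optional[str]:
    """Return the preferred healthy answer, or None if none are healthy.

    Endpoints with no health-check result are treated as unhealthy.
    """
    candidates = [a for a in answers if healthy.get(a.rdata, False)]
    if not candidates:
        return None
    return min(candidates, key=lambda a: a.priority).rdata


answers = [Answer("192.0.2.10", 1), Answer("198.51.100.20", 2)]
print(steer(answers, {"192.0.2.10": True, "198.51.100.20": True}))   # 192.0.2.10
print(steer(answers, {"192.0.2.10": False, "198.51.100.20": True}))  # 198.51.100.20
print(steer(answers, {}))                                            # None
```

Geolocation or ASN/IP prefix steering would add a step before this one that first filters the answers by where the query came from.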

Hi,

Thanks for the blog writeup.

Do you have a step-by-step document for playing around with the new edge services (both WAF and traffic steering policies) for the use cases explained?

It would help to test how useful these features are.

Thanks,

Thomas

There is a YouTube video demonstrating WAF: https://www.youtube.com/watch?v=jM4ol0_mj_c

And there is a great in-depth blog post about traffic steering here: https://redthunder.blog/2019/02/26/getting-started-with-oci-traffic-management/